At Bootlin, we regularly work on networking topics as part of our Linux kernel contributions and thus we decided to attend our very first Netdev conference this year in Montreal. With the recent evolution of the network subsystem and its drivers capabilities, the conference was a very good opportunity to stay up-to-date, thanks to lots of interesting sessions.

The speakers and the Netdev committee did an impressive job by offering such a great schedule and the recorded talks are already available on the Netdev Youtube channel. We particularly liked a few of those talks.

Distributed Switch Architecture – slides – video

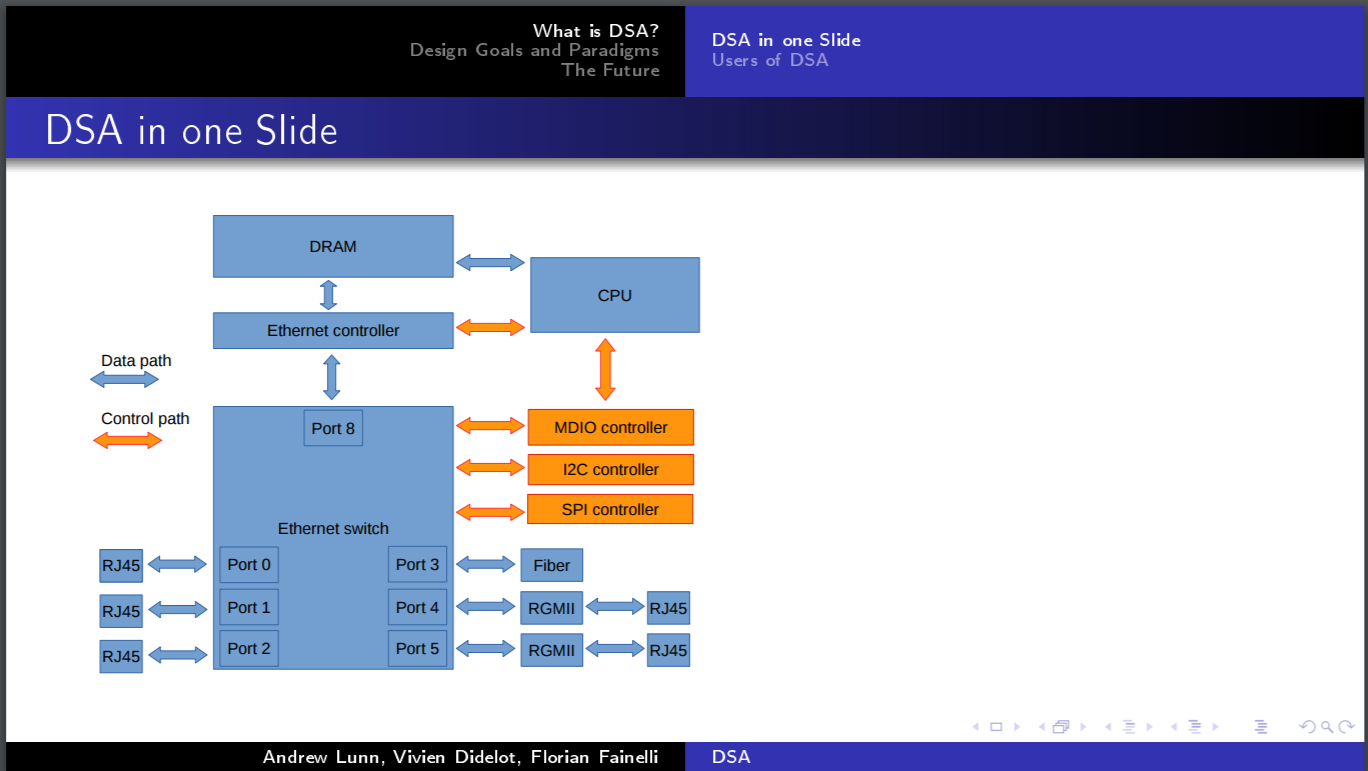

Andrew Lunn, Viven Didelot and Florian Fainelli presented DSA, the Distributed Switch Architecture, by giving an overview of what DSA is and by then presenting its design. They completed their talk by discussing the future of this subsystem.

The goal of the DSA subsystem is to support Ethernet switches connected to the CPU through an Ethernet controller. The distributed part comes from the possibility to have multiple switches connected together through dedicated ports. DSA was introduced nearly 10 years ago but was mostly quiet and only recently came back to life thanks to contributions made by the authors of this talk, its maintainers.

The main idea of DSA is to reuse the available internal representations and tools to describe and configure the switches. Ports are represented as Linux network interfaces to allow the userspace to configure them using common tools, the Linux bridging concept is used for interface bridging and the Linux bonding concept for port trunks. A switch handled by DSA is not seen as a special device with its own control interface but rather as an hardware accelerator for specific networking capabilities.

DSA has its own data plane where the switch ports are slave interfaces and the Ethernet controller connected to the SoC a master one. Tagging protocols are used to direct the frames to a specific port when coming from the SoC, as well as when received by the switch. For example, the RX path has an extra check after netif_receive_skb() so that if DSA is used, the frame can be tagged and reinjected into the network stack RX flow.

Finally, they talked about the relationship between DSA and Switchdev, and cross-chip configuration for interconnected switches. They also exposed the upcoming changes in DSA as well as long term goals.

Memory bottlenecks – slides

As part of the network performances workshop, Jesper Dangaard Brouer presented memory bottlenecks in the allocators caused by specific network workloads, and how to deal with them. The SLAB/SLUB baseline performances are found to be too slow, particularly when using XDP. A way from a driver to solve this issue is to implement a custom page recycling mechanism and that’s what all high-speed drivers do. He then displayed some data to show why this mechanism is needed when targeting the 10G network budget.

Jesper is working on a generic solution called page pool and sent a first RFC at the end of 2016. As mentioned in the cover letter, it’s still not ready for inclusion and was only sent for early reviews. He also made a small overview of his implementation.

DDOS countermeasures with XDP – slides #1, slides #2 – video #1, video #2

These two talks were given by Gilberto Bertin from Cloudflare and Martin Lau from Facebook. While they were not talking about device driver implementation or improvements in the network stack directly related to what we do at Bootlin, it was nice to see how XDP is used in production.

XDP, the eXpress Data Path, provides a programmable data path at the lowest point of the network stack by processing RX packets directly out of the drivers’ RX ring queues. It’s quite new and is an answer to lots of userspace based solutions such as DPDK. Gilberto andMartin showed excellent results, confirming the usefulness of XDP.

From a driver point of view, some changes are required to support it. RX hooks must be added as well as some API changes and the driver’s memory model often needs to be updated. So far, in v4.10, only a few drivers are supporting XDP.

XDP MythBusters – slides – video

David S. Miller, the maintainer of the Linux networking stack and drivers, did an interesting keynote about XDP and eBPF. The eXpress Data Path clearly was the hot topic of this Netdev 2.1 conference with lots of talks related to the concept and David did a good overview of what XDP is, its purposes, advantages and limitations. He also quickly covered eBPF, the extended Berkeley Packet Filters, which is used in XDP to filter packets.

This presentation was a comprehensive introduction to the concepts introduced by XDP and its different use cases.

Conclusion

Netdev 2.1 was an excellent experience for us. The conference was well organized, the single track format allowed us to see every session on the schedule, and meeting with attendees and speakers was easy. The content was highly technical and an excellent opportunity to stay up-to-date with the latest changes of the networking subsystem in the kernel. The conference hosted both talks about in-kernel topics and their use in userspace, which we think is a very good approach to not focus only on the kernel side but also to be aware of the users needs and their use cases.